Explore the story of the first computer, learn when the computer was invented, and trace key milestones from Babbage’s 1800s machines to Colossus and ENIAC.

The First Computer: What It Was and When It Was Invented

Some inventions arrive before their time and seem to vanish into the vacuum of history overnight, not to be seen again for ages. Take solar cells: Charles Fritts built the first one in 1883, but they didn’t gain mass popularity until more than a century later. Then there’s something closer to home: the computer. Despite what many think, computers weren’t invented in the 1940s. That’s when computers started to look more like the machines we know today, but they were built on an existing foundation, on the shoulders of the engineers who came before them.


What Was the First Computer?

The title of “first computer” can refer to several inventions. Charles Babbage designed the Analytical Engine in the 19th century; it introduced key concepts of modern computing but was never completed. In the electronic era, the Atanasoff–Berry Computer (1942) performed digital calculations in binary, while ENIAC (completed in 1945) was the first general-purpose electronic computer, capable of being reprogrammed to solve different problems. ENIAC is often seen as the start of the modern computer era.

The Land Before Bits and Bytes

Long before computers were associated with mechanical devices, the word “computer” was recorded as early as 1613 as a label for a person who performed calculations. That meaning stuck to its human counterpart for centuries; human computers were still at work well into the 20th century. Then, in 1822, English mathematician, philosopher, and inventor Charles Babbage introduced the concept of an automatic computing machine, which he called the Difference Engine.

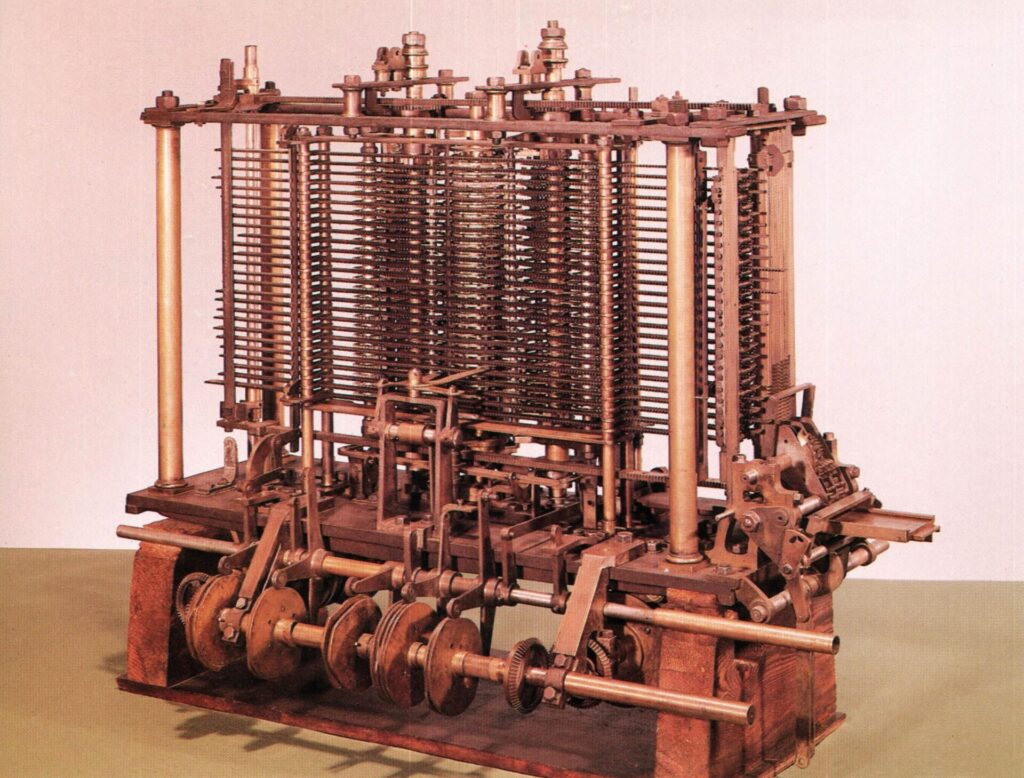

The Difference Engine was entirely mechanical. It could only compute numbers and record its results on physical materials. Its limitations were clear to Babbage: to make the leap from simple calculations to some beefy computations, he was going to need a more general-purpose tool. So, as British government funding for the project dried up, the famous inventor turned his sights on something bigger: a general-purpose computing machine that he called the Analytical Engine.

The Analytical Engine was, in many ways, the foundation for the digital computers we know and use today. While it was still mechanical, it had several systems inside that closely mirror today’s technology, including:

- A Store, the equivalent of a computer’s memory today, that held numbers and the results of calculations.

- A Mill, the equivalent of the Central Processing Unit (CPU) found in every computer from desktops to smartphones, that performed arithmetic calculations.

- Flow Control, which is still used in today’s programming environments for things like conditional branching and looping.

- Outputs that recorded the results of programmed computations on physical materials like paper and punch cards, which we’ve now largely replaced with monitors.
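To make the split between Store, Mill, flow control, and output a little more concrete, here is a minimal Python sketch of a toy machine organized the same way. It is a hypothetical illustration only, not a model of Babbage’s real design: the operation names (`SET`, `ADD`, `SUB`, `MUL`, `JUMP_IF_POS`, `PRINT`), the tuple “card” format, and the `run` function are all invented for this example.

```python
import operator

# Hypothetical toy machine: the operation names and tuple "card" format are
# invented for illustration and are NOT Babbage's actual design.

# The Mill: the arithmetic operations it can perform.
MILL_OPS = {"ADD": operator.add, "SUB": operator.sub, "MUL": operator.mul}


def run(program, store_size=10):
    """Run a list of (operation, *arguments) tuples and return the outputs."""
    store = [0] * store_size  # the Store: numbered columns holding numbers
    printed = []              # stands in for the printer or card punch
    pc = 0                    # index of the next instruction "card" to read
    while pc < len(program):
        op, *args = program[pc]
        if op == "SET":
            # Input: place a constant in a Store column.
            column, value = args
            store[column] = value
        elif op in MILL_OPS:
            # The Mill fetches two numbers from the Store, computes,
            # and writes the result back to the Store.
            a, b, dest = args
            store[dest] = MILL_OPS[op](store[a], store[b])
        elif op == "JUMP_IF_POS":
            # Flow control: jump to another card if a column is positive,
            # which is enough to build loops.
            column, target = args
            if store[column] > 0:
                pc = target
                continue
        elif op == "PRINT":
            # Output: record the value held in a Store column.
            (column,) = args
            printed.append(store[column])
        else:
            raise ValueError(f"unknown operation: {op!r}")
        pc += 1
    return printed


# Usage: compute 5! (5 x 4 x 3 x 2 x 1) with a loop.
factorial_of_5 = [
    ("SET", 0, 5),            # column 0: n = 5
    ("SET", 1, 1),            # column 1: running product = 1
    ("SET", 2, 1),            # column 2: the constant 1
    ("MUL", 1, 0, 1),         # product = product * n
    ("SUB", 0, 2, 0),         # n = n - 1
    ("JUMP_IF_POS", 0, 3),    # loop back to the MUL while n > 0
    ("PRINT", 1),             # output the product
]
print(run(factorial_of_5))    # [120]
```

The conditional jump is what turns a fixed sequence of operations into a loop, which is why flow control matters as much to a programmable machine as the arithmetic itself.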

Early Arrival

As you can see, Babbage laid the groundwork for the computers of our digital age, and his machine could be programmed. It would take input in the form of a program, do the heavy-lifting computations with its Mill, keep the results in its Store, and output them on a physical medium. These fundamental processes are still how today’s computers work, but Babbage was a hundred years ahead of his time! And Babbage wasn’t alone in his ingenuity. He had a collaborator who understood his inventions just as deeply and saw their future possibilities for programming.

Her name? Ada Lovelace.

Enchanting Numbers

To understand Ada Lovelace, whom the world of computer science considers the first programmer, you first have to understand her parents. Ada was the daughter of the famous poet Lord Byron. If there’s one thing to know about this man, it’s that he had some violent mood swings.

So, as you can imagine, the marriage between Lord Byron and Ada’s mother, Anne Isabella Milbanke (Lady Byron), didn’t last long. Just weeks after Ada was born, they split up. From that moment on, everything shifted in Ada’s life. Rather than poetry and art, her mother focused Ada’s studies on science, philosophy, and mathematics, all with the goal of ensuring that Ada would never turn out like her father.

Her mother’s strategy worked. Ada was tutored in the mathematics and sciences of the day and flourished. At the age of 17, she met Charles Babbage, and a friendship began that lasted the rest of her life. Despite the large age difference, Babbage and Lovelace were equals in intellectual might. Babbage mentored Ada, and she began learning about his Difference Engine and Analytical Engine. She was entranced.

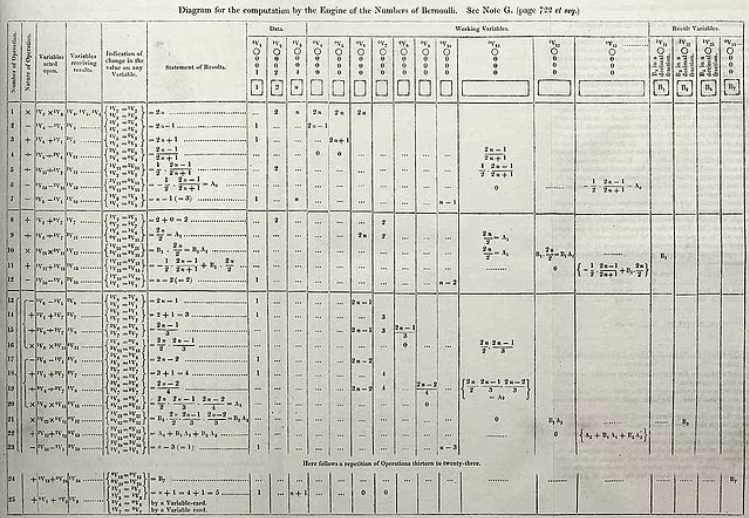

In the early 1840s, Ada translated an article on Babbage’s Analytical Engine written by Italian engineer Luigi Menabrea. She went beyond translating the text, adding her own extensive notes about the machine and its implications for the future. Let me tell you, she didn’t hold back.

New Realization

Ada understood early on that the machines Babbage had designed could do more than just work with numbers. They could manipulate any data that numbers could represent. The possibilities were endless. Ada glimpsed the future in the Analytical Engine, with possibilities like:

- Composing elaborate pieces of music of any degree of complexity.

- Manipulating symbols, not just numbers, for true computation rather than simple calculation.

- Using a computer not just for computation but also for graphical drawings.

In short, Ada was a prophet of the computer age that would eventually dominate our entire society. Except she was over 100 years too early. Her published work disappeared into the vacuum of history, and so did Ada.

A Century Down the Road

Fast-forward a century, and Ada Lovelace’s contributions to computer science and Babbage’s foundation of modern computing finally came to light. Ada’s ideas about the potential of computer programming reached a wide audience when her notes on Babbage’s invention were republished by B.V. Bowden in the 1953 book Faster Than Thought: A Symposium on Digital Computing Machines. Since then, Ada has become known worldwide as the first computer programmer, and the United States Department of Defense even named a programming language after her: Ada.

All the groundwork that Charles Babbage laid in the 1800s also came to fruition in 1936, when Alan Turing described the concept behind the modern computer. Did Turing base his ideas on the work Babbage created a century earlier? Who knows. What he did succeed in was describing, in theory, a machine controlled by a program: coded instructions that were read in, processed, stored, and output. All of these systems (memory, processing, data input, and output of results) had been anticipated a century earlier by Babbage.

Many Computer Firsts

The rest of the history of computer development seems to rush by in a blur. Colossus, the first programmable electronic computer, was built in 1943 and helped British code breakers read encrypted German messages during World War II. ENIAC, the first general-purpose electronic digital computer, was unveiled in 1946; it took up about 1,800 square feet, packed in nearly 18,000 vacuum tubes, and weighed about 30 tons. By 1975, hobbyists could buy the Altair 8800, widely credited with launching the personal computer revolution. And today, we’ve got computers that we can strap to our wrists; the progress is just mind-blowing.

Laying the Foundations

This blog isn’t just a history lesson; it’s also a reminder about the importance of foundations and how most great inventions are built on top of them. Without Charles Babbage’s pioneering work on mechanical computing machines or Ada Lovelace’s insight into the possibilities of computer programming, we would never be where we are today. And this is how progress in engineering works on a large scale, even beyond computers.

All of the work that we do, day in and day out, is possible because we stand on the shoulders of the creators who came before us. But we’re not just drawing the same old circuits or recreating the same parts from scratch. We’re building on what we or someone else created in the past that can be trusted and relied upon. In many ways, the success we experience today is only possible because of the work done in the past, whether through your own engineering efforts or through inventors and visionaries like Charles Babbage and Ada Lovelace. These two saw the future clearly and pointed us in a new direction. Without them, our digital computer age wouldn’t be what it is today.

Autodesk Fusion helps you use the technologies of the past to design the future. Check out Fusion today!