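Product Design and Manufacturing

Don't wait for it to happen. Make it.

New technologies and processes are creating new possibilities. Explore these resources from Autodesk University to learn more.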

Theater Talk

Building a Better Future with Drones

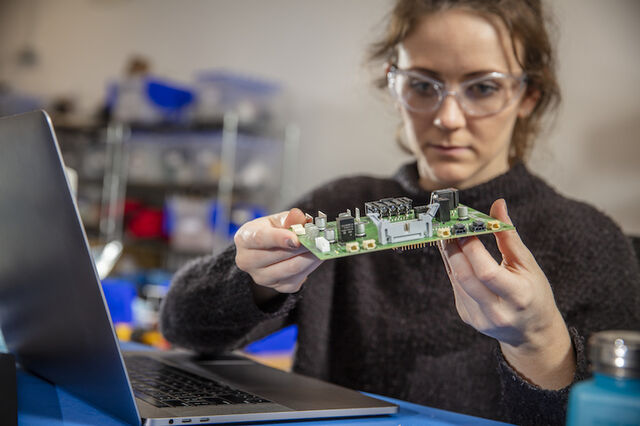

Class

Achieving Successful Digital Transformation Through the Product Management Organization

Playlist

Product Development and Manufacturing: Increasing the Capacity to Innovate

Class

Quick Start with Model States

Article

Digital Topics: Approaches to Connected Manufacturing

Article

AI, Digital Twins, and the Future of the Product Design Process
