The digital transformation of the architecture, engineering, and construction (AEC) industry has come a long way. We’ve digitized our schedules, estimates, and designs. We now track materials in real time, manage submittals through cloud platforms, and coordinate complex building systems inside three-dimensional models. However, there’s one critical data layer that most projects still handle the same way they did 30 years ago: the ground beneath our feet.

Subsurface data, the information about soil types, rock formations, groundwater levels, and geotechnical test results that determines what we can build and how, is still exchanged overwhelmingly as static reports in PDFs or spreadsheets. Having spent years working at the intersection of geotechnical data and infrastructure design, I keep seeing the same pattern: every other data stream in construction has found its way into connected digital environments, yet geotechnical information remains locked in static documents, disconnected databases, and paper field logs. For an industry investing heavily in digital tools, this is a blind spot worth solving.

AEC ranks among the least digitized industries globally, spending less than one percent of revenues on IT, according to McKinsey. Even within construction’s growing digital ecosystem, subsurface data stands out as a laggard. Consider the typical workflow: a geotechnical consultant conducts a site investigation, produces a multi-hundred-page PDF report, and emails it to the design team. Engineers then manually extract what they need, re-entering values into their own spreadsheets and models. Every handoff introduces the risk of transcription errors, security lapses, version conflicts, and lost context.

This matters more than most teams realize. Research from FMI found that 96% of data captured in engineering and construction goes unused. In my experience, geotechnical data is among the most underused. It’s not that the information doesn’t exist; it’s that it’s trapped in specialist reports, inaccessible to the project managers and designers who need it to make informed decisions. The data is there, but the visibility isn’t.

Unforeseen ground conditions remain one of the most persistent sources of cost overruns and schedule delays in infrastructure projects. Nine out of ten construction projects experience cost overruns, averaging 28%, and ground-related surprises are a leading contributor. The U.S. National Research Council recommended that at least three percent of total project cost be allocated to site investigation, yet actual spending often falls below 0.3%, an order-of-magnitude gap between what experts recommend and what the industry actually invests.

History offers sobering examples. Boston’s Central Artery/Tunnel Project, better known as the Big Dig, was estimated at $2.8 billion but ultimately cost $14.78 billion, with subsurface unknowns in reclaimed land identified as a major factor. London’s Crossrail project took a different approach: by investing in more than 1,400 boreholes and building a comprehensive ground model before construction began, the team minimized unforeseen ground issues across 42 km of complex tunnelling. The contrast is instructive. Better ground data does not just reduce risk; it fundamentally changes how confidently a project can move forward.

But cost overruns and delays are not the only price paid for inadequate ground data. When subsurface conditions are poorly understood, engineers compensate through conservative design — adding material safety margins to foundations, piling, and earthworks to account for what isn't known. That means more concrete, more steel, and more carbon. In an industry already responsible for 39% of global CO₂ emissions, with concrete production alone accounting for 8% of all emissions, the systematic over-engineering of underground structures is a largely invisible but significant contributor. Improving ground data quality isn't just a cost management strategy — it is a direct lever on sustainability outcomes that rarely gets framed in this manner.

And then there is the most serious cost of all: human life. When Hurricane Katrina struck New Orleans in 2005, the levee failures that killed nearly 1,800 people were not simply a consequence of the storm — independent investigations found that the soft clay and peat conditions beneath the flood defences had been identified in prior site investigations but were never adequately incorporated into the design. In Oso, Washington, a slope collapse killed 43 people in 2014 on a hillside that prior geotechnical studies had already flagged as unstable. In both cases, the data existed. What was missing was the system to connect it to the people who needed it. The ground doesn't lie — but when geotechnical data is fragmented and siloed, its warnings can go unheard until it is too late.

The shift to digital subsurface data is not simply about digitizing the old workflow, in which field observations are captured on paper, manually re-typed into databases, and turned into long static reports distributed as PDFs. It’s about creating a continuous data pipeline from the field to the design environment, with validation and traceability at every step. What I’ve seen work on projects around the world typically involves three connected capabilities:

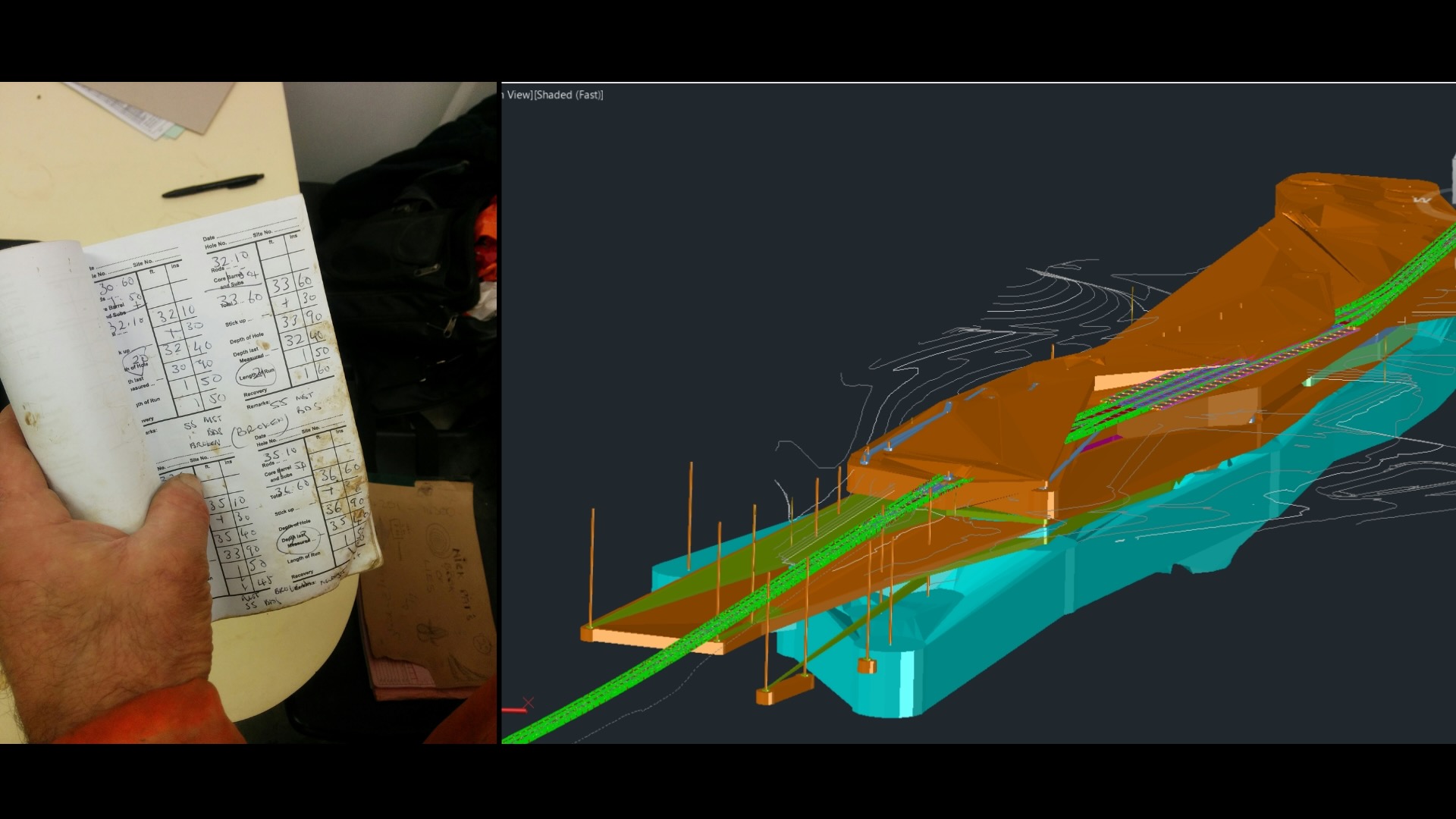

Instead of paper forms filled by drillers and later re-entered by office staff, digital field tools enforce standard-compliant data entry at the point of collection. Ground descriptions are generated automatically from entered soil properties, reducing interpretation errors. Samples can be tracked with QR codes for chain-of-custody traceability. The result is cleaner data, captured once and transmitted with full traceability and no transcription step.
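
To make the idea of validation at the point of collection concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `LayerEntry` fields, the tiny vocabularies, and the description format are hypothetical and not taken from any particular logging standard or field tool.

```python
from dataclasses import dataclass

# Hypothetical vocabularies; a real tool would load the terms defined by
# whichever logging standard the project has adopted.
ALLOWED_SOILS = {"CLAY", "SILT", "SAND", "GRAVEL", "PEAT"}
ALLOWED_CONSISTENCY = {"soft", "firm", "stiff", "loose", "dense"}


@dataclass(frozen=True)
class LayerEntry:
    borehole_id: str
    top_m: float       # depth to top of layer, metres below ground level
    base_m: float      # depth to base of layer, metres below ground level
    soil: str
    consistency: str
    color: str


def validate(entry: LayerEntry) -> list[str]:
    """Return a list of problems; an empty list means the entry is accepted."""
    problems = []
    if entry.top_m < 0:
        problems.append("top depth is negative")
    if entry.base_m <= entry.top_m:
        problems.append("base depth must be below top depth")
    if entry.soil not in ALLOWED_SOILS:
        problems.append(f"unknown soil type: {entry.soil}")
    if entry.consistency not in ALLOWED_CONSISTENCY:
        problems.append(f"unknown consistency: {entry.consistency}")
    return problems


def describe(entry: LayerEntry) -> str:
    """Build the description from structured fields instead of free text."""
    return f"{entry.consistency.capitalize()} {entry.color} {entry.soil}"


entry = LayerEntry("BH-01", 0.0, 1.5, "CLAY", "soft", "grey")
if not validate(entry):
    print(describe(entry))  # Soft grey CLAY
```

Because the description is derived from the validated fields, two loggers entering the same properties produce the same wording, and a rejected entry is corrected in the field rather than discovered in the office.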

Rather than scattering geotechnical data across project folders, email attachments, and individual laptops, a single, structured, securely shared database becomes the authoritative source. Multiple team members can access the same data simultaneously. Automated validation catches errors before they propagate downstream. And critically, historical data from previous projects on the same site or in the same region becomes searchable and reusable, rather than buried in archives nobody can find.
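
As a rough sketch of what a single structured source with automated validation can look like, the example below uses Python's built-in `sqlite3` module. The schema, table names, and sample boreholes are invented for illustration, and an in-memory database stands in for the shared server a real deployment would need.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for a shared, access-controlled server
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when asked
conn.executescript("""
CREATE TABLE borehole (
    id         TEXT PRIMARY KEY,
    project    TEXT NOT NULL,
    easting_m  REAL NOT NULL,
    northing_m REAL NOT NULL
);
CREATE TABLE layer (
    borehole_id TEXT NOT NULL REFERENCES borehole(id),
    top_m       REAL NOT NULL CHECK (top_m >= 0),
    base_m      REAL NOT NULL,
    soil        TEXT NOT NULL,
    CHECK (base_m > top_m)
);
""")


def add_layer(conn, borehole_id, top_m, base_m, soil):
    """Insert a layer; return False if the database rejects it."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO layer VALUES (?, ?, ?, ?)",
                (borehole_id, top_m, base_m, soil),
            )
        return True
    except sqlite3.IntegrityError:
        return False


def boreholes_near(conn, easting_m, northing_m, radius_m):
    """Return (id, project) for boreholes from any project within radius_m."""
    rows = conn.execute(
        "SELECT id, project, easting_m, northing_m FROM borehole ORDER BY id"
    ).fetchall()
    return [
        (bid, project)
        for bid, project, e, n in rows
        if (e - easting_m) ** 2 + (n - northing_m) ** 2 <= radius_m ** 2
    ]


# Hypothetical records from two earlier projects and one distant site.
conn.executemany(
    "INSERT INTO borehole VALUES (?, ?, ?, ?)",
    [
        ("BH-01", "Station upgrade 2015", 100.0, 200.0),
        ("BH-07", "Road widening 2021", 130.0, 240.0),
        ("BH-99", "Remote site", 5000.0, 5000.0),
    ],
)
conn.commit()

print(add_layer(conn, "BH-01", 0.0, 1.5, "CLAY"))  # accepted
print(add_layer(conn, "BH-01", 3.0, 2.0, "SAND"))  # rejected: base above top
print(boreholes_near(conn, 110.0, 210.0, 60.0))    # finds both earlier projects
```

The design choice worth noting is that the rules live in the database rather than in each user's spreadsheet, so every team reading or writing the data is held to the same checks.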

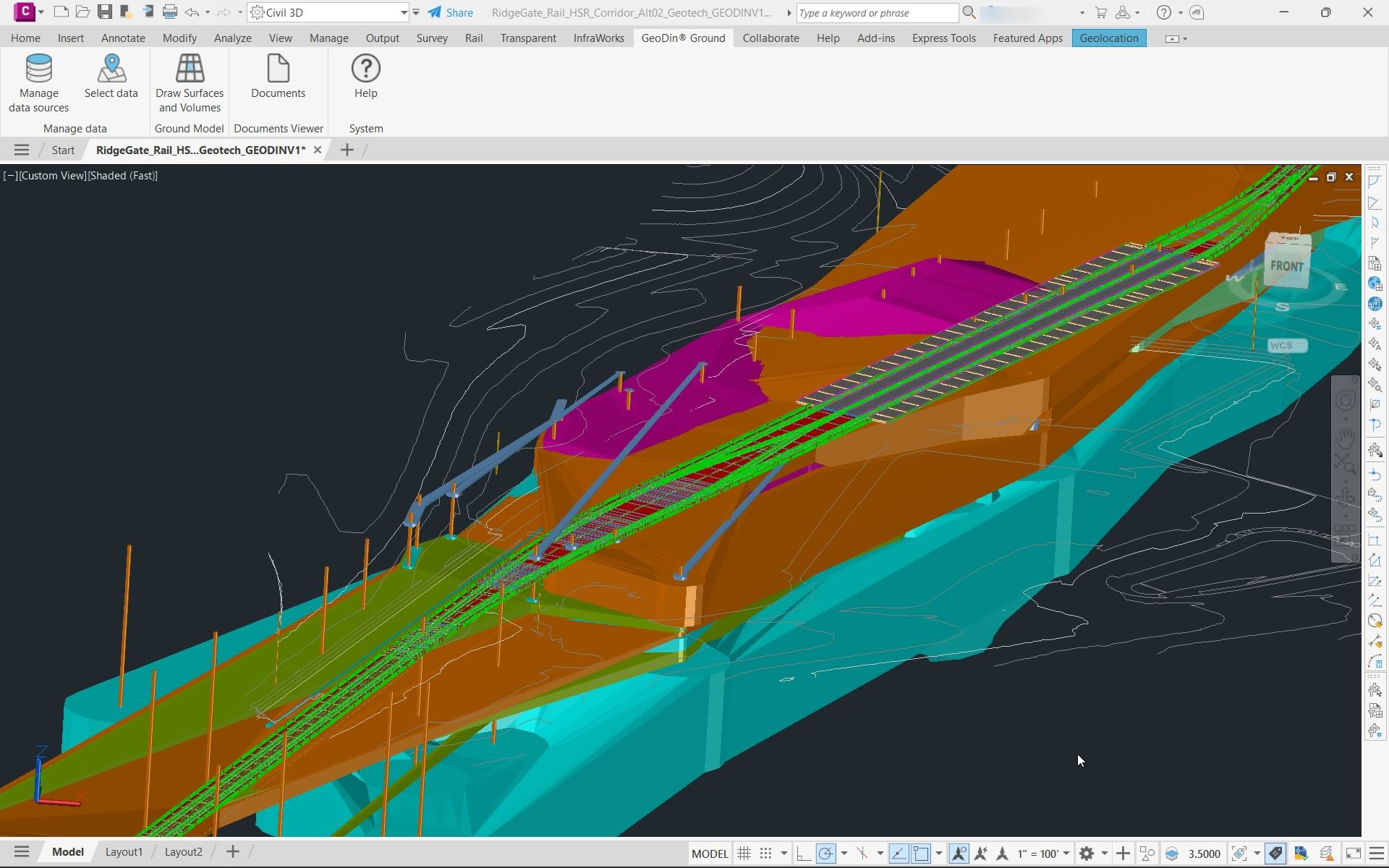

This is where the real value compounds. When subsurface data flows directly into civil design and BIM environments, engineers can visualize borehole data as 3D models, generate ground surfaces, spot problem ground early, and calculate earthwork volumes without manual re-entry. It makes the ground transparent for infrastructure designers, allowing them to make informed decisions and avoid over-engineering, costly delays, and expensive rework. It also eliminates one of the most error-prone handoffs in the infrastructure design process.
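
The step from borehole records to a ground surface and an earthwork volume can be sketched in a few lines of Python. This is a simplified illustration, not how any specific design package works: it assumes inverse-distance weighting for interpolation (production tools typically use triangulated surfaces or geostatistical methods), and the function names, coordinates, and levels are hypothetical.

```python
import math


def idw_elevation(x, y, points, power=2.0):
    """Inverse-distance-weighted elevation at (x, y) from (x, y, z) points."""
    num = den = 0.0
    for px, py, pz in points:
        d = math.hypot(x - px, y - py)
        if d == 0.0:
            return pz  # exactly on a borehole: use its measured value
        w = 1.0 / d ** power
        num += w * pz
        den += w
    return num / den


def cut_volume(points, x0, y0, x1, y1, formation_level, cell=1.0):
    """Approximate volume of ground above formation_level inside a rectangle.

    The rectangle is split into square cells; each cell contributes its
    interpolated depth above formation level times its plan area.
    """
    nx = round((x1 - x0) / cell)
    ny = round((y1 - y0) / cell)
    volume = 0.0
    for i in range(nx):
        for j in range(ny):
            cx = x0 + (i + 0.5) * cell  # cell centre
            cy = y0 + (j + 0.5) * cell
            depth = idw_elevation(cx, cy, points) - formation_level
            if depth > 0:
                volume += depth * cell * cell
    return volume


# Hypothetical ground levels (metres) at four borehole locations.
ground = [(0, 0, 10.2), (10, 0, 10.8), (0, 10, 9.9), (10, 10, 11.1)]
print(f"{cut_volume(ground, 0, 0, 10, 10, formation_level=8.0):.1f} m3")
```

As a sanity check, perfectly flat ground at 10 m with a formation level of 8 m over a 10 m by 10 m footprint gives approximately 200 m³. The point of the sketch is that once borehole data is structured, a new reading updates the surface and the volume without anyone retyping a number.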

Not every project requires the same level of digital maturity for subsurface data. But if any of these situations sound familiar, the ground beneath your project deserves more attention:

When the investigation report is a static document, any new information from additional boreholes, changed water table readings, or updated lab results requires a whole new report. Design teams end up working from stale data without realizing it.

When geotechnical engineers, structural designers, and construction managers each extract and reformat ground data independently, discrepancies are inevitable. A single source of truth eliminates conflicting assumptions.

If understanding what’s beneath your site requires tracking down a specific person or hunting through archived reports, the data isn’t accessible enough. Project-wide visibility into subsurface conditions should be as routine as checking the latest drawing revision.

The AEC digital transformation has progressed from above ground downward: first scheduling, then estimating, then design coordination, then field management. Subsurface data is the next natural frontier.

As projects become more complex, as we face a shortage of expertise amid accelerating workloads, and as owners demand greater certainty on cost and schedule, the ability to integrate ground data into the digital project environment will shift from a competitive advantage to a baseline expectation. In an era where sustainability is critically important, over-engineering designs to compensate for uncertainty, and thereby increasing the carbon footprint of an industry that is already one of the leading sources of carbon emissions, simply cannot be the approach.

The technology to make this happen already exists. Digital field collection tools, centralized geotechnical databases, rich visualization and analysis tools, and design-environment integrations are all available and proven on projects around the world. What is still catching up is the mindset: the recognition that ground data is not a specialist concern to be handled in isolation, but a project-wide asset that belongs in the same connected digital ecosystem as every other data stream.

We are at a moment where the industry is actively breaking down silos, facilitating easier data integration and fostering collaboration between teams that have historically worked in isolation. The valuable insights that geotechnical teams generate every day are starting to reach the designers and project managers who need them most. That shift is already underway on forward-thinking projects. The question for every team is whether they will bring the ground into their digital environment now, while it is still a differentiator, or later, at the risk of unexpected costs, delays, or even catastrophic failures.